Membase MCP Server

Description

Membase is the first decentralized memory layer for AI agents, powered by Unibase. It provides secure, persistent storage for conversation history, interaction records, and knowledge — ensuring agent continuity, personalization, and traceability.

The Membase-MCP Server enables seamless integration with the Membase protocol, allowing agents to upload and retrieve memory from the Unibase DA network for decentralized, verifiable storage.

Functions

Saved messages and memories can be viewed at: https://testnet.hub.membase.io/

- get_conversation_id: Get the current conversation id.

- switch_conversation: Switch to a different conversation.

- save_message: Save a message/memory into the current conversation.

- get_messages: Get the last n messages from the current conversation.
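The way the four functions relate to each other can be illustrated with a small in-memory model. This is only a sketch of the semantics described above, not the server's implementation: the class name `ConversationMemory` and the parameter names are hypothetical, and nothing here talks to Membase or the Unibase DA network.

```python
from collections import defaultdict


class ConversationMemory:
    """Hypothetical in-memory stand-in for the four functions listed above."""

    def __init__(self, conversation_id: str) -> None:
        self._current = conversation_id
        # Maps each conversation id to its ordered list of saved messages.
        self._messages = defaultdict(list)

    def get_conversation_id(self) -> str:
        """Return the id of the conversation currently in use."""
        return self._current

    def switch_conversation(self, conversation_id: str) -> None:
        """Make another conversation the current one."""
        self._current = conversation_id

    def save_message(self, content: str) -> None:
        """Append a message to the current conversation."""
        self._messages[self._current].append(content)

    def get_messages(self, n: int) -> list:
        """Return the last n messages of the current conversation."""
        if n <= 0:
            return []
        return list(self._messages[self._current][-n:])


memory = ConversationMemory("demo-1")
memory.save_message("hello")
memory.save_message("world")
print(memory.get_messages(1))  # ['world']

memory.switch_conversation("demo-2")
print(memory.get_messages(5))  # []
```

In this model every save and read applies to whichever conversation is current, so switching conversations changes what `get_messages` returns without deleting anything.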

Installation

git clone https://github.com/unibaseio/membase-mcp.git

cd membase-mcp

uv run src/membase_mcp/server.py

Environment variables

- MEMBASE_ACCOUNT: the account used for uploads (an address of the form 0x...)

- MEMBASE_CONVERSATION_ID: your conversation id; it should be unique, and its existing history is preloaded

- MEMBASE_ID: your instance id (a sub-account name; any string)
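Before launching the server it can help to check that these variables are set. The following is a minimal sketch using only the standard library; the `MembaseSettings` dataclass and `load_settings` helper are hypothetical names, and treating all three variables as required is an assumption, since the list above does not say whether the server falls back to defaults.

```python
import os
from dataclasses import dataclass

# Variable names taken from the list above.
REQUIRED_VARS = ("MEMBASE_ACCOUNT", "MEMBASE_CONVERSATION_ID", "MEMBASE_ID")


@dataclass(frozen=True)
class MembaseSettings:
    """Hypothetical container for the three values."""

    account: str
    conversation_id: str
    instance_id: str


def load_settings(env=None) -> MembaseSettings:
    """Read the three variables from env (defaults to os.environ).

    Assumption: all three are required; unset or empty values are rejected.
    """
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise ValueError("missing environment variables: " + ", ".join(missing))
    return MembaseSettings(
        account=env["MEMBASE_ACCOUNT"],
        conversation_id=env["MEMBASE_CONVERSATION_ID"],
        instance_id=env["MEMBASE_ID"],
    )


# Example with an explicit mapping instead of the real environment.
example = load_settings(
    {
        "MEMBASE_ACCOUNT": "0x...",
        "MEMBASE_CONVERSATION_ID": "my-unique-conversation",
        "MEMBASE_ID": "my-instance",
    }
)
print(example.conversation_id)  # my-unique-conversation
```

Calling `load_settings()` with no argument reads the process environment, which makes it usable as a quick pre-flight check.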

Configuration on Claude/Windsurf/Cursor/Cline

{
  "mcpServers": {
    "membase": {
      "command": "uv",
      "args": [
        "--directory",
        "path/to/membase-mcp",
        "run",
        "src/membase_mcp/server.py"
      ],
      "env": {
        "MEMBASE_ACCOUNT": "your account, 0x...",
        "MEMBASE_CONVERSATION_ID": "your conversation id, should be unique",
        "MEMBASE_ID": "your sub account, any string"
      }
    }
  }
}
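If the same entry has to be produced for several clients or accounts, it can be generated rather than edited by hand. The sketch below builds the structure shown above with the standard `json` module; `build_membase_config` is a hypothetical helper, and where each client stores its configuration file is not covered here.

```python
import json


def build_membase_config(repo_dir, account, conversation_id, instance_id):
    """Return the mcpServers entry shown above as a Python dict."""
    return {
        "mcpServers": {
            "membase": {
                "command": "uv",
                "args": [
                    "--directory",
                    repo_dir,  # path to the cloned membase-mcp repository
                    "run",
                    "src/membase_mcp/server.py",
                ],
                "env": {
                    "MEMBASE_ACCOUNT": account,
                    "MEMBASE_CONVERSATION_ID": conversation_id,
                    "MEMBASE_ID": instance_id,
                },
            }
        }
    }


config = build_membase_config(
    repo_dir="path/to/membase-mcp",
    account="0x...",
    conversation_id="my-unique-conversation",
    instance_id="my-instance",
)
# Print JSON that can be pasted into the client's MCP configuration.
print(json.dumps(config, indent=2))
```

The placeholder values are the same ones used in the configuration above and need to be replaced with real ones.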

Usage

Call the functions from an LLM chat:

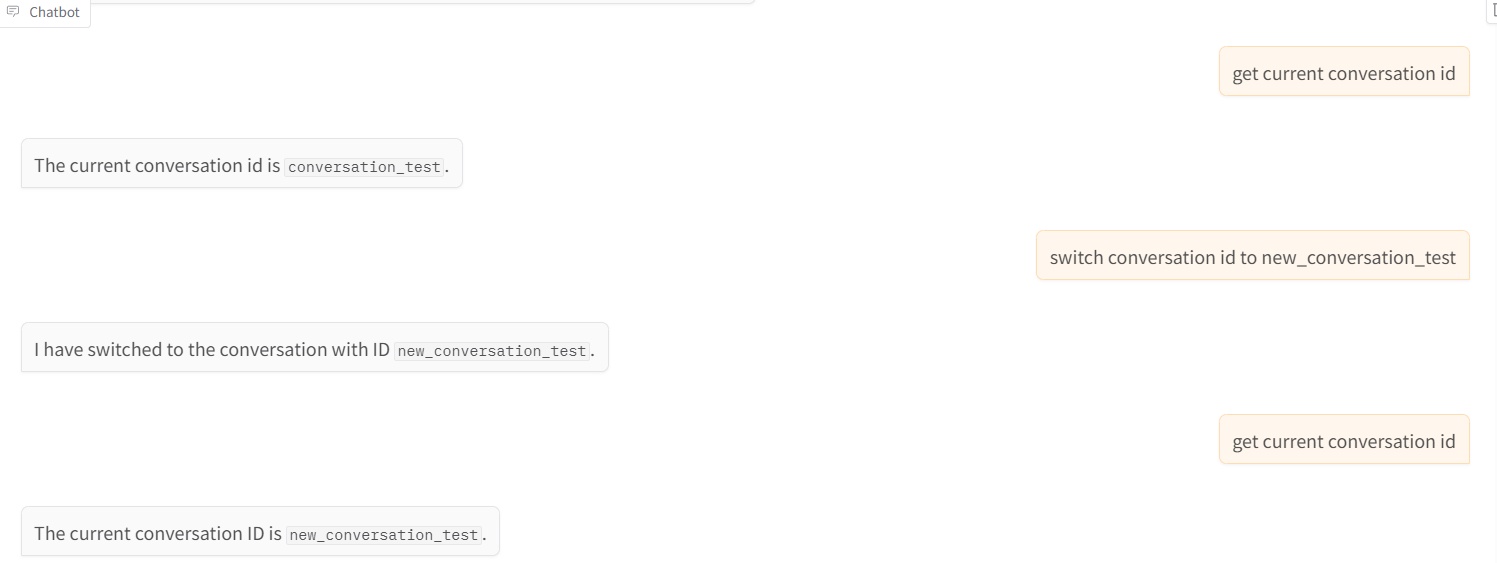

- get conversation id and switch conversation

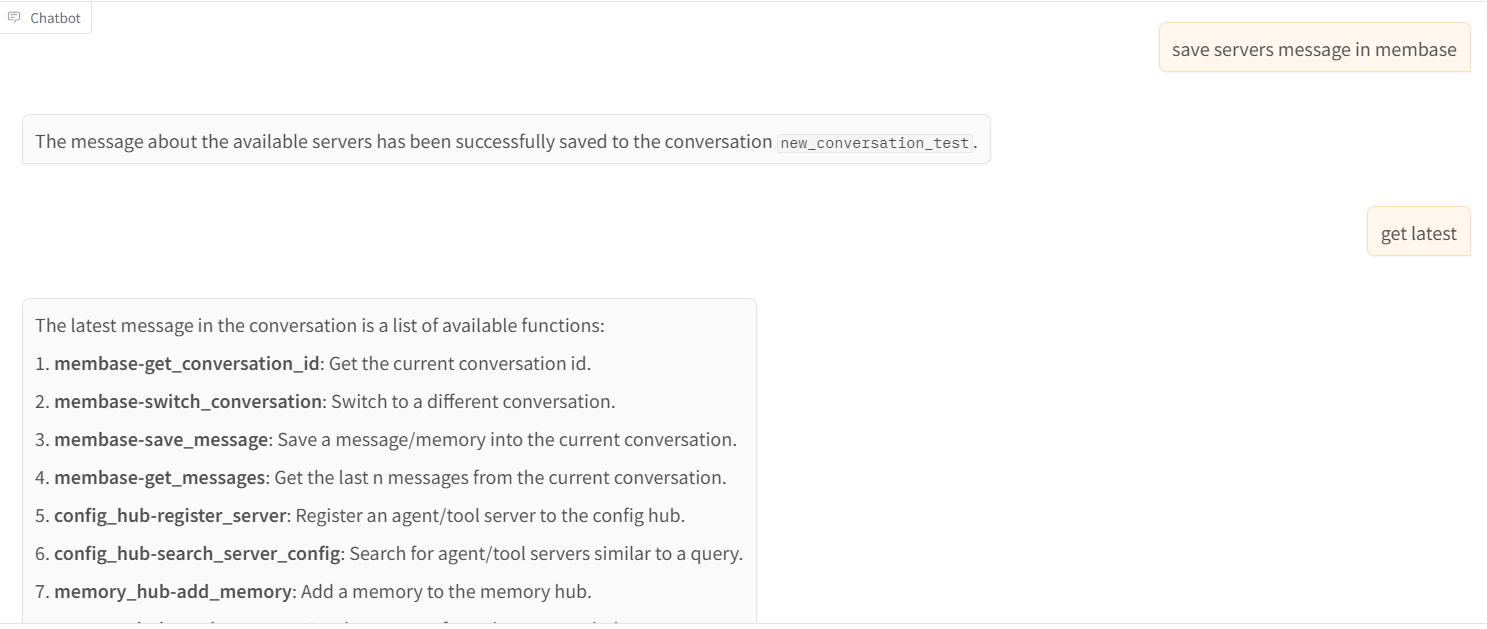

- save message and get messages
