Model Context Protocol - with PagerDuty

This is an MCP server that integrates with PagerDuty. The integration supports basic queries like:

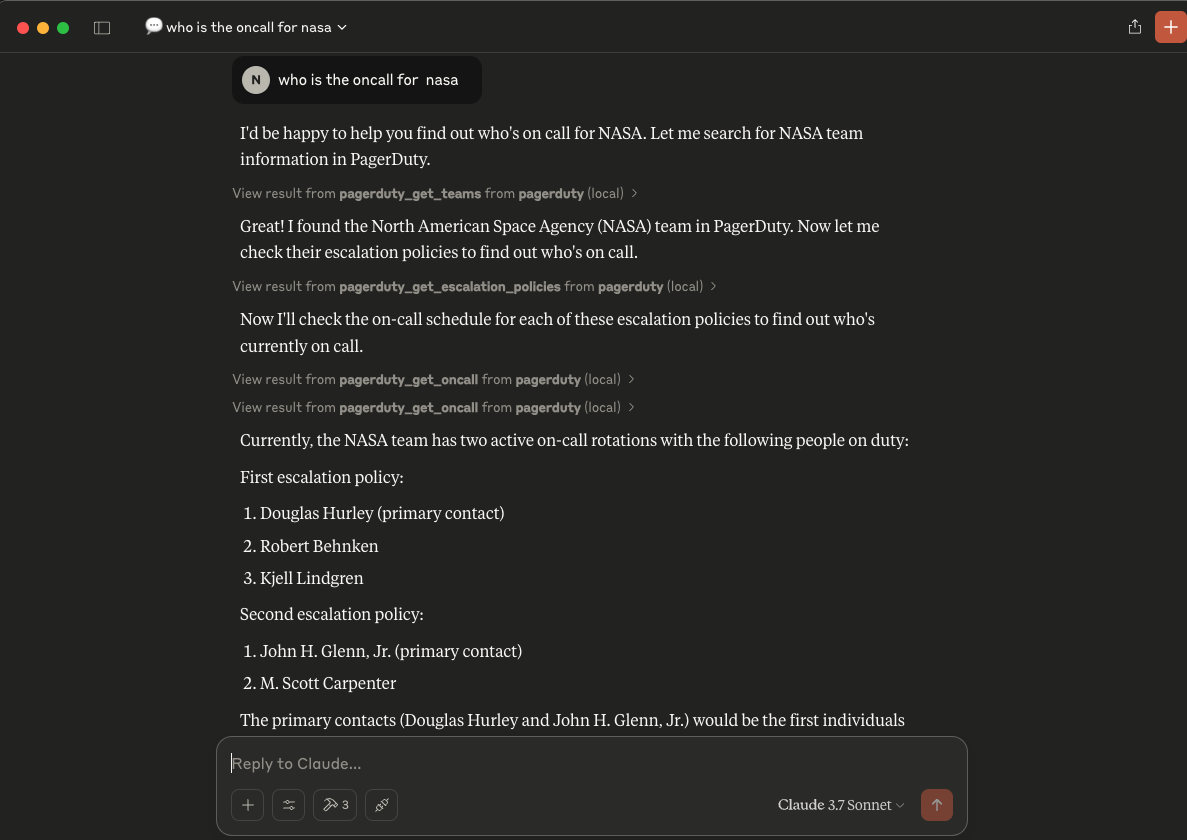

"Who is on call for the NASA team right now?"
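As an illustration, a query like the one above could map onto the `GET /oncalls` endpoint of the PagerDuty REST API. The sketch below is an assumption about one way to do this, not necessarily how this server does it: the function names `build_oncalls_request` and `summarize_oncalls` and the sample payload are hypothetical, and resolving a team name such as "NASA" to escalation policy IDs is left out.

```python
import urllib.parse
import urllib.request

PAGERDUTY_API = "https://api.pagerduty.com"


def build_oncalls_request(api_key, escalation_policy_ids=()):
    """Build (but do not send) a GET /oncalls request."""
    # Array filters are passed as repeated `name[]=value` query pairs.
    params = [("escalation_policy_ids[]", pid) for pid in escalation_policy_ids]
    url = f"{PAGERDUTY_API}/oncalls"
    if params:
        url += "?" + urllib.parse.urlencode(params)
    return urllib.request.Request(
        url,
        headers={
            "Authorization": f"Token token={api_key}",
            "Accept": "application/vnd.pagerduty+json;version=2",
        },
    )


def summarize_oncalls(payload):
    """Turn an /oncalls response body into short human-readable lines."""
    lines = []
    for entry in payload.get("oncalls", []):
        user = entry.get("user", {}).get("summary", "unknown user")
        policy = entry.get("escalation_policy", {}).get("summary", "unknown policy")
        level = entry.get("escalation_level", "?")
        lines.append(f"{user} (level {level}, {policy})")
    return lines


# Hypothetical sample shaped like an /oncalls response; no network call is made.
sample = {
    "oncalls": [
        {
            "user": {"summary": "Jane Doe"},
            "escalation_policy": {"summary": "NASA"},
            "escalation_level": 1,
        }
    ]
}
print("\n".join(summarize_oncalls(sample)))
```

Keeping the request builder separate from the formatter means each piece can be checked without a live PagerDuty account.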

The server can be integrated with Claude in a few simple steps.

Integration with PD

Set your PagerDuty API token as an environment variable by running:

export PAGERDUTY_API_KEY=your_api_key_here
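On the Python side, the server presumably reads this variable at startup. A minimal sketch of doing so with an early, readable failure is shown here; the helper name `load_api_key` is hypothetical and may not match what `pagerduty.py` actually does.

```python
import os


def load_api_key(env=os.environ):
    """Return the PagerDuty API key from the environment, or raise a clear error."""
    key = env.get("PAGERDUTY_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "PAGERDUTY_API_KEY is not set; export it before starting the server."
        )
    return key
```

Failing at startup with an explicit message tends to be easier to debug than an authentication error on the first query.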

Integration with Claude

First, make sure you have Claude for Desktop installed. You can install the latest version here.

We’ll need to configure Claude for Desktop for whichever MCP servers you want to use. To do this, open your Claude for Desktop App configuration at

~/Library/Application Support/Claude/claude_desktop_config.json

in a text editor. Make sure to create the file if it doesn’t exist. Refer here for more. Update the config with the entry below:

{
  "mcpServers": {
    "weather": {
      "command": "uv",
      "args": [
        "--directory",
        "/ABSOLUTE/PATH/TO/PARENT/FOLDER/server/pagerduty",
        "run",
        "pagerduty.py"
      ]
    }
  }
}
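If the config file already contains other servers or settings, editing it by hand risks overwriting them. The following is a small sketch of merging one entry into the file programmatically; the helper name `add_server_entry` is hypothetical, and the path and entry in the usage comment simply repeat the values shown above.

```python
import json
from pathlib import Path


def add_server_entry(config_path, name, entry):
    """Add or replace one entry under "mcpServers", keeping other settings intact."""
    path = Path(config_path).expanduser()
    text = path.read_text() if path.exists() else ""
    # Treat a missing or empty file as an empty config.
    config = json.loads(text) if text.strip() else {}
    config.setdefault("mcpServers", {})[name] = entry
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n")
    return config


# Example usage (writes to the real config file, so it is left commented out):
# add_server_entry(
#     "~/Library/Application Support/Claude/claude_desktop_config.json",
#     "weather",
#     {
#         "command": "uv",
#         "args": [
#             "--directory",
#             "/ABSOLUTE/PATH/TO/PARENT/FOLDER/server/pagerduty",
#             "run",
#             "pagerduty.py",
#         ],
#     },
# )
```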

You may need to put the full path to the uv executable in the command field. You can get this by running which uv on macOS/Linux.
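The same lookup can be done from Python with the standard library, which is handy if the config is generated by a script. This is only a sketch, and the helper name `resolve_command` is hypothetical.

```python
import shutil


def resolve_command(name="uv"):
    """Return the full path of `name` if it is found on PATH, else the bare name."""
    return shutil.which(name) or name


print(resolve_command("uv"))
```

Falling back to the bare name keeps the config usable on machines where the executable is found through the PATH seen by Claude for Desktop.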

Configuration Testing

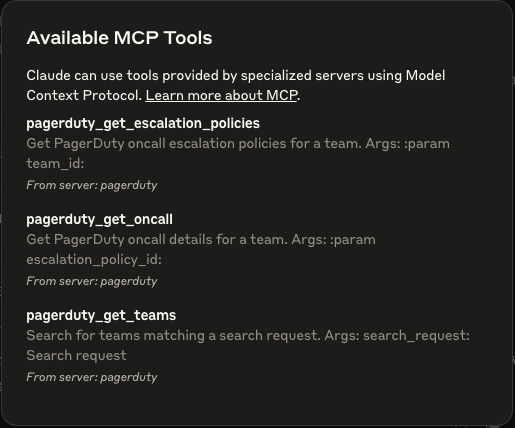

At the end, once you've configured Claude. Select the tools button, to verify 3 MCP tools are available.

- The prompt should show something as below:

Demo
